How to Manage Connection Problems: The Definitive Systems Guide

In an era defined by the ubiquity of distributed systems and high-frequency data exchange, the stability of a network connection has moved from a technical luxury to a fundamental utility. Connectivity is the silent pulse of modern industry, governance, and domestic life. Yet as the underlying architecture becomes increasingly abstracted, shifting from physical copper and fiber to the ephemeral layers of cloud virtualization and 5G edge computing, diagnosing a failure has become an exercise in multi-layered forensic analysis. When a system drops, the root cause could reside anywhere from a physical cable in a subterranean vault to a misconfigured BGP (Border Gateway Protocol) route halfway across the globe.

Managing these disruptions requires more than a reactionary checklist; it demands a systemic understanding of how data moves across different protocols and the specific failure points inherent in each. The complexity is compounded by the fact that “connection” is a broad term, encompassing local area networks (LAN), wide-area enterprise tunnels (SD-WAN), and the intricate handshake between software APIs. In each of these domains, a “problem” can manifest as a total blackout, high latency (lag), or packet loss, each requiring a distinct diagnostic lens and a different set of resolution tools.

This article serves as a definitive architectural reference for managing the inevitable friction of our hyper-connected world. We will move beyond the superficial “reboot your router” advice to explore the structural dynamics of network engineering, the psychological and operational cost of downtime, and the rigorous governance frameworks required to maintain high-availability systems. By shifting the perspective from reactive repair to proactive system management, we aim to provide the mental models necessary to navigate a landscape where connectivity is constant, but stability is hard-won.

Understanding Connection Problems

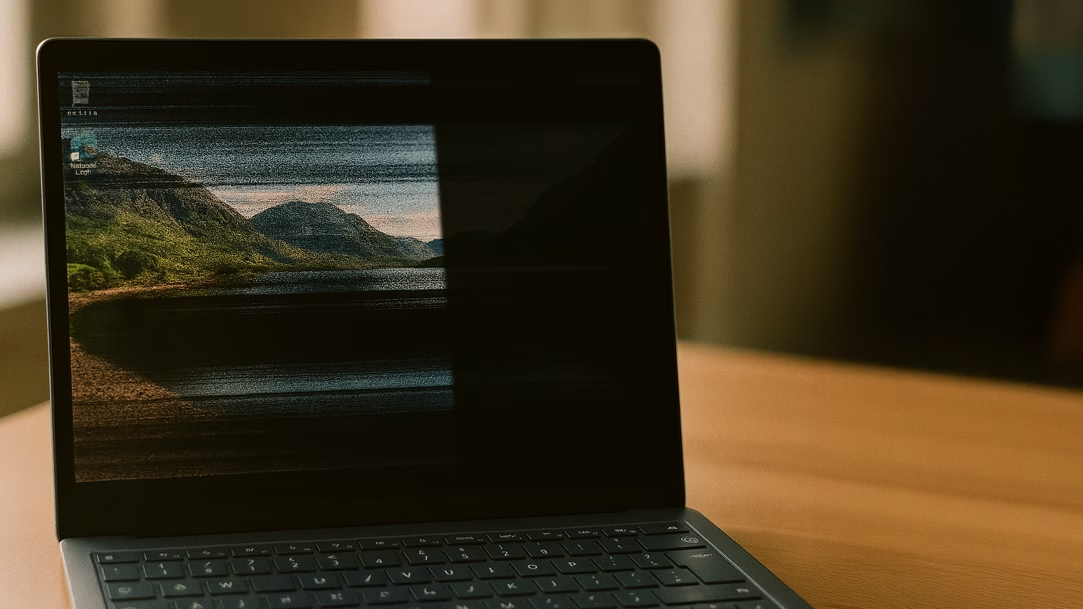

To master connection management, one must first accept that connectivity is not a binary state. In high-performance environments, a link that is “connected” but “unusable” is common and often more frustrating than a total outage. This nuance is where most standard troubleshooting fails. From a systemic perspective, managing these issues involves identifying where the breakdown occurs within the OSI (Open Systems Interconnection) model, which ranges from the physical hardware (Layer 1) to the application software (Layer 7).

The primary risk in managing connectivity is oversimplification. Many administrators and users fall into the trap of “single-point bias,” assuming the fault lies with the most visible component, such as the Wi-Fi router or the service provider. In reality, modern connections are a “daisy chain” of dependencies. A DNS (Domain Name System) failure, for instance, looks exactly like an internet outage to the end user, even if the physical line is perfectly healthy. Without a tiered approach to diagnostics, hours can be wasted fixing components that were never broken.

Furthermore, the scale of the environment dictates the strategy. Managing a connection for a remote worker in a rural area involves variables like satellite latency and signal attenuation, whereas managing connectivity for a multi-national data center involves managing load balancers, redundant fiber paths, and peering agreements. The core of effective management lies in “isolating the segment”—systematically testing sections of the data path until the anomaly is cornered.

Contextual Background: The Evolution of Digital Handshakes

The history of connection problems is a history of increasing abstraction. In the early days of telephony and dial-up, the connection was physical and linear. A broken wire meant no signal. Diagnostics were manual and electrical. As we moved into the broadband era, the “always-on” expectation emerged, shifting the focus from establishing a connection to maintaining its quality.

The Rise of the Cloud and Virtualization

The shift to cloud computing in the 2010s added a layer of “logical” connection problems. Now a server might be physically “up” while its virtual network interface card (vNIC) is misconfigured. Connectivity became a software-defined asset. This increased flexibility but also introduced “invisible” failure points, where a software update or an expired security certificate could suddenly sever a connection without any change in the hardware.

The 5G and IoT Explosion

Today, the density of connections has reached a tipping point. We are no longer just connecting computers; we are connecting sensors, cars, and appliances. This has shifted the “problem” landscape from bandwidth (speed) to congestion and interference. In many urban environments, managing connection problems is now less about “finding a signal” and more about “filtering out the noise” from thousands of competing devices.

Conceptual Frameworks: Mental Models for Diagnostics

Experienced engineers do not troubleshoot randomly; they use mental models to navigate the complexity of the network.

1. The “Divide and Conquer” Model

This is the most fundamental diagnostic strategy. If a system fails, you test the midpoint of the chain. If the midpoint works, the problem is in the second half; if it fails, the problem is at the midpoint or in the first half. Because each test halves the remaining path, a chain of N segments needs only about log2(N) tests: a 30-hop route can be narrowed to a single faulty hop in five tests.
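The bisection idea can be sketched in Python as a binary search over an ordered list of hops. This is a minimal illustration, assuming a hypothetical `reachable(hop)` probe that returns True when a test from the source through that hop succeeds, and assuming a single fault, so every hop before the break passes and every hop after it fails.

```python
from typing import Callable, Optional, Sequence, TypeVar

T = TypeVar("T")


def find_first_failure(path: Sequence[T], reachable: Callable[[T], bool]) -> Optional[T]:
    """Return the first hop on `path` that fails `reachable`, or None if all pass.

    Assumes a single fault: probe results are monotonic (pass ... pass, fail ... fail),
    which is what makes binary search valid.
    """
    lo, hi = 0, len(path)  # search window is path[lo:hi]
    while lo < hi:
        mid = (lo + hi) // 2
        if reachable(path[mid]):
            lo = mid + 1  # mid works, so the fault lies after it
        else:
            hi = mid  # mid fails, so the fault is at mid or earlier
    return path[lo] if lo < len(path) else None


if __name__ == "__main__":
    hops = [f"hop{i}" for i in range(1, 31)]  # a hypothetical 30-hop route
    probed = []

    def probe(hop: str) -> bool:
        probed.append(hop)
        return int(hop[3:]) < 19  # simulate a break starting at hop19

    print(find_first_failure(hops, probe), len(probed))  # hop19 5
```

The simulated break is found after five probes, matching the log2(30) estimate. Real networks can violate the single-fault assumption (for example, a hop that silently drops test traffic), so treat the result as a starting point for closer inspection.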

2. The Dependency Map

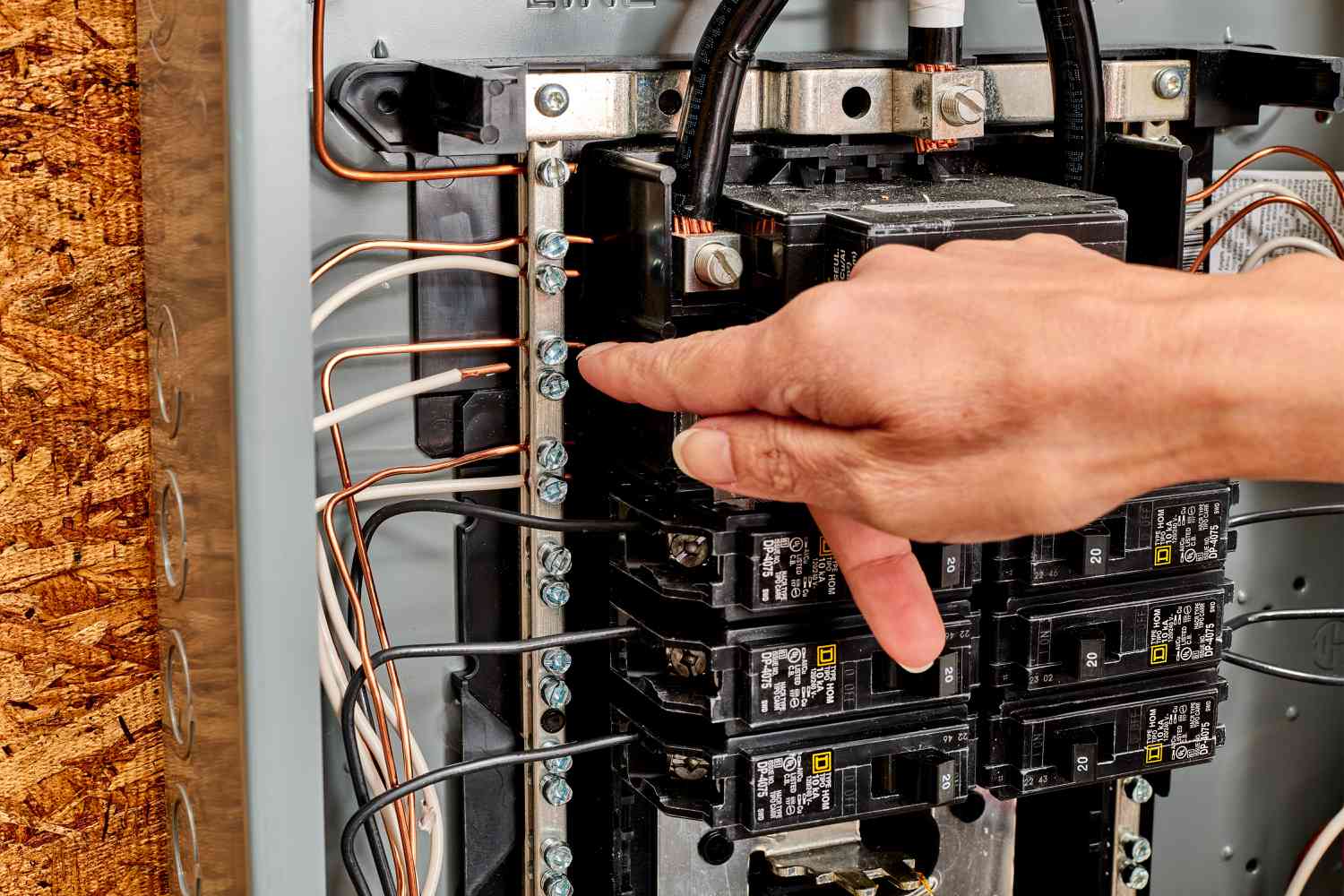

Every connection relies on a stack of prerequisites: Power → Physical Link → IP Address → DNS → Authentication. This framework ensures that you don’t waste time checking a software login if the router doesn’t have an IP address. It enforces a “bottom-up” diagnostic discipline.
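The bottom-up discipline amounts to running checks in dependency order and stopping at the first failure. The sketch below assumes each layer is represented by a hypothetical zero-argument check function; in practice these might wrap a link-status query, an address lookup, or a DNS resolution, but those wrappers are not shown here.

```python
from typing import Callable, Optional, Sequence, Tuple

# (layer name, check that returns True when the layer is healthy)
Check = Tuple[str, Callable[[], bool]]


def first_broken_layer(checks: Sequence[Check]) -> Optional[str]:
    """Run checks bottom-up and return the name of the first failing layer.

    Higher layers depend on lower ones, so once a layer fails there is no
    point testing anything above it; those checks are never called.
    """
    for name, check in checks:
        if not check():
            return name
    return None  # every layer passed


if __name__ == "__main__":
    # Hypothetical results: the link is up, but no IP address was assigned.
    stack = [
        ("Power", lambda: True),
        ("Physical Link", lambda: True),
        ("IP Address", lambda: False),
        ("DNS", lambda: True),
        ("Authentication", lambda: True),
    ]
    print(first_broken_layer(stack))  # IP Address
```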

3. Signal-to-Noise Ratio (SNR) Thinking

In wireless and high-speed copper environments, the problem is often not a “broken” link but a “dirty” one. This framework views every connection as a battle between the data signal and environmental noise. It shifts the solution from “increasing power” (which often increases noise) to “improving isolation.”
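Because radio signal and noise levels are usually quoted in dBm (a logarithmic scale), SNR in decibels is simply the difference between them. This short sketch shows why raising power alone may not help: if the noise floor seen by the receiver rises by the same amount, the SNR is unchanged. The function names are illustrative, not from any particular tool.

```python
def snr_db(signal_dbm: float, noise_dbm: float) -> float:
    """SNR in dB. Both inputs are in dBm, so the power ratio becomes a subtraction."""
    return signal_dbm - noise_dbm


def snr_linear(snr_in_db: float) -> float:
    """Convert a dB power ratio back into a plain ratio (20 dB -> 100x)."""
    return 10 ** (snr_in_db / 10)


if __name__ == "__main__":
    print(snr_db(-65, -90))  # 25
    # Boost the signal by 6 dB, but the noise floor also rises by 6 dB:
    print(snr_db(-59, -84))  # 25, no improvement
```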

Key Categories of Connectivity Failure

Managing failures requires categorizing them by their symptoms, causes, and remedies.

| Category | Typical Symptom | Primary Cause | Management Strategy |

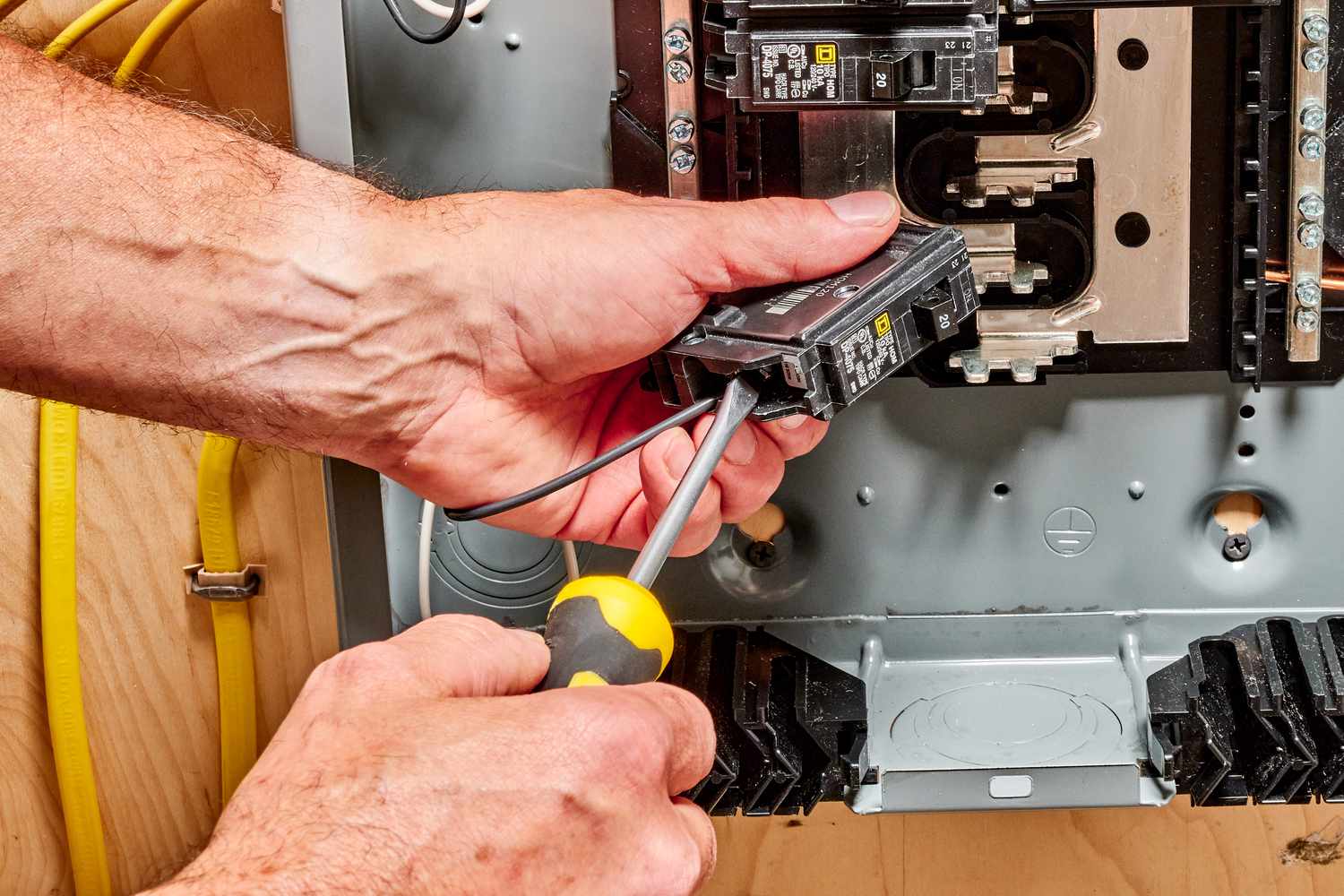

| Physical (Layer 1) | No Link / Red Light | Cut cables, bad ports, power loss | Physical inspection/Redundancy |

| Congestion | Slow speeds, high lag | Too many users, limited bandwidth | Quality of Service (QoS) / Throttling |

| Logic/Config | “Connected, no internet” | Misconfigured DNS, IP conflicts | IP refresh / DNS flush |

| Interference | Intermittent drops | Microwaves, walls, radio noise | Frequency hopping / Shielding |

| Authentication | Login loops | Expired certificates, MFA lag | Token reset / Server sync |

| ISP/External | Total regional outage | Provider backhaul failure | Failover to cellular/Secondary ISP |

Realistic Decision Logic

If the connection is lost, the first question should always be: “Is this isolated to one device, or is it affecting the whole network?” If it’s one device, the problem is local (drivers, NIC). If it’s the whole network, the problem is upstream (router, modem, ISP). This single question roughly halves the search space before any diagnostic tool is opened.
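The first triage question can be written as a small decision function. This is a sketch under stated assumptions: you already know how many devices are affected out of how many were checked, and the "partial" and "inconclusive" outcomes are our own additions for cases the one-device/whole-network split does not cover.

```python
def triage(affected: int, total: int) -> str:
    """Classify an outage by scope, following the one-device vs. whole-network question."""
    if total < 1 or not 0 <= affected <= total:
        raise ValueError("need total >= 1 and 0 <= affected <= total")
    if affected == 0:
        return "no outage"
    if total == 1:
        return "inconclusive"  # nothing to compare against; test a second device
    if affected == 1:
        return "local"  # drivers, NIC, device settings
    if affected == total:
        return "upstream"  # router, modem, ISP
    return "partial"  # some devices only: isolate the shared segment further


if __name__ == "__main__":
    print(triage(1, 12))   # local
    print(triage(12, 12))  # upstream
```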

Detailed Real-World Scenarios

The “Jittery” Video Conference

- The Constraint: The user has high-speed fiber but experiences “freezing” during calls.
- The Diagnostic: A standard speed test shows 500Mbps, but a sustained ping test shows high jitter (variability in delay), and latency climbs sharply while the line is busy.
- The Cause: Bufferbloat on the local router. Its oversized buffers queue too much data at once, so time-sensitive video packets wait behind a backlog of bulk traffic.
- The Resolution: Implementing Smart Queue Management (SQM) or prioritizing video traffic over background downloads.
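Both symptoms in this scenario can be quantified from a series of ping round-trip times. The sketch below uses one simple jitter measure, the mean absolute change between consecutive samples (RFC 3550 defines a smoothed variant that some real-time apps report instead), and estimates bufferbloat as the rise in median latency between an idle link and a saturated one. Collecting the samples is assumed to happen elsewhere.

```python
from statistics import mean, median
from typing import Sequence


def jitter_ms(rtts_ms: Sequence[float]) -> float:
    """Mean absolute change between consecutive round-trip times (simple jitter)."""
    if len(rtts_ms) < 2:
        return 0.0
    return mean(abs(b - a) for a, b in zip(rtts_ms, rtts_ms[1:]))


def latency_under_load_ms(idle_rtts_ms: Sequence[float], loaded_rtts_ms: Sequence[float]) -> float:
    """Extra median latency while the link is saturated; a large value suggests bufferbloat."""
    return median(loaded_rtts_ms) - median(idle_rtts_ms)


if __name__ == "__main__":
    idle = [12, 13, 12, 14, 12]        # hypothetical samples, quiet line
    loaded = [45, 180, 60, 210, 90]    # hypothetical samples, large download running
    print(jitter_ms(idle), jitter_ms(loaded))   # 1.5 131.25
    print(latency_under_load_ms(idle, loaded))  # 78
```

Running the same measurement before and after enabling SQM is one way to check whether the change actually helped.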

The Warehouse Wi-Fi Dead Zone

- The Constraint: Handheld scanners lose connection in the center of a large distribution center.
- The Diagnostic: Signal strength is high at the edges but drops suddenly near the pallet racks.
- The Cause: Multipath interference. The metal racks reflect the Wi-Fi signal, and the reflected copies arrive out of phase with the direct signal, partially cancelling it.
- The Resolution: Moving to the 5GHz or 6GHz band with access points spaced closer together along the aisles, or installing directional antennas aimed down the rows.
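The cancellation effect can be illustrated with an idealized two-ray model. This sketch assumes two copies of equal strength and ignores the extra phase flip a metal reflection introduces, so it shows the mechanism rather than predicting real signal levels: a reflected path that is half a wavelength longer than the direct one cancels it completely, and at 2.4GHz that is only about 6cm.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def wavelength_m(freq_hz: float) -> float:
    """Wavelength = c / f."""
    return SPEED_OF_LIGHT_M_S / freq_hz


def combined_amplitude(path_difference_m: float, freq_hz: float) -> float:
    """Relative amplitude of two equal-strength copies of the same signal.

    The reflected copy travels `path_difference_m` farther, shifting its phase
    by 2*pi*d/lambda. The sum has amplitude 2*|cos(phase/2)|: 2.0 when the
    copies line up, 0.0 when they cancel.
    """
    phase = 2 * math.pi * path_difference_m / wavelength_m(freq_hz)
    return 2 * abs(math.cos(phase / 2))


if __name__ == "__main__":
    lam = wavelength_m(2.4e9)
    print(round(lam, 4))                                 # 0.1249 (metres)
    print(round(combined_amplitude(lam / 2, 2.4e9), 6))  # 0.0, full cancellation
    print(round(combined_amplitude(lam, 2.4e9), 6))      # 2.0, in phase
```

Because the spacing between peaks and nulls is a fraction of a wavelength, a scanner moved a few centimetres can swing between good and poor reception, which is consistent with the "sudden" drops near the racks.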

The Remote Office VPN Collapse

- The Constraint: A satellite office cannot reach the main server, though its staff can browse the public web.
- The Diagnostic: Traceroute shows the data reaches the main office gateway but stops there.
- The Cause: MTU (Maximum Transmission Unit) mismatch. The VPN adds “overhead” to every data packet, making full-size packets too large for the ISP’s limit, so they are dropped.
- The Resolution: Lowering the MTU on the VPN tunnel so that the “wrapped” packets fit through the ISP’s pipe.
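Choosing the tunnel MTU is subtraction: the link MTU minus the VPN’s encapsulation overhead, and TCP’s maximum segment size (MSS) is that MTU minus the IP and TCP headers. The overhead depends on the VPN protocol and cipher; the 80-byte figure below is WireGuard’s worst case (IPv6 outer header), which is where its default MTU of 1420 comes from. Other VPNs differ, so check your own product’s documentation. The helper names are illustrative.

```python
IPV4_HEADER_BYTES = 20
TCP_HEADER_BYTES = 20  # assumes no TCP options


def tunnel_mtu(link_mtu: int, encapsulation_overhead: int) -> int:
    """Largest inner packet that still fits in one outer packet after encapsulation."""
    mtu = link_mtu - encapsulation_overhead
    if mtu <= 0:
        raise ValueError("encapsulation overhead exceeds the link MTU")
    return mtu


def tcp_mss(mtu: int) -> int:
    """TCP payload per segment over IPv4, assuming no IP or TCP options."""
    return mtu - IPV4_HEADER_BYTES - TCP_HEADER_BYTES


if __name__ == "__main__":
    inner = tunnel_mtu(1500, 80)  # Ethernet link, WireGuard worst-case overhead
    print(inner, tcp_mss(inner))  # 1420 1380
```

To find the real path MTU, iputils `ping -M do -s 1472 <host>` on Linux sends a 1,500-byte IPv4 packet (1,472 bytes of payload plus 28 bytes of ICMP and IP headers) with fragmentation prohibited; reducing `-s` until replies come back reveals the largest size that gets through.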

Planning, Cost, and Resource Dynamics

The cost of connectivity is not just the monthly subscription; it is the “cost of failure.”

Cost Analysis of Connectivity Resilience

| Tier | Investment | Primary Resource | Value Proposition |
|---|---|---|---|
| Standard | $100/mo | Single ISP, consumer router | Low cost, high risk |
| Resilient | $300/mo | Dual ISP (Cable + 5G), Prosumer router | Automatic failover |
| Enterprise | $2,000+/mo | Dedicated Fiber, SD-WAN, On-site IT | 99.99% Uptime, SLA guarantees |

The opportunity cost of “bad” management is human productivity. If a 100-person office loses connection for one hour, the loss is not just the $50 of “internet time,” but the 100 hours of salary and the disruption of momentum. Investing in redundant paths—such as a Starlink backup for a fiber-based office—often pays for itself in a single averted outage.
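The outage arithmetic and the meaning of an uptime guarantee can both be made concrete. This sketch assumes a hypothetical fully loaded labor cost per person-hour, which you would replace with your own figure, and treats a year as 365 days.

```python
HOURS_PER_YEAR = 365 * 24


def outage_cost(people: int, hours: float, loaded_hourly_cost: float,
                connectivity_cost: float = 0.0) -> float:
    """Lost labor for everyone idled by the outage, plus the connection's own cost."""
    return people * hours * loaded_hourly_cost + connectivity_cost


def allowed_downtime_minutes_per_year(uptime_fraction: float) -> float:
    """Yearly downtime budget implied by an availability target such as 0.9999."""
    return (1 - uptime_fraction) * HOURS_PER_YEAR * 60


if __name__ == "__main__":
    # Hypothetical $40 per person-hour; 100 people, one hour, $50 of "internet time".
    print(outage_cost(100, 1, 40.0, 50.0))                   # 4050.0
    print(round(allowed_downtime_minutes_per_year(0.9999), 2))  # 52.56
```

Under that assumed rate, one such outage costs more than a year of the $200/month difference between the Standard and Resilient tiers ($2,400), which is the sense in which a backup path can pay for itself after a single averted outage.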

Tools, Strategies, and Support Systems

To manage connection problems effectively, one must rely on a professional toolkit.

- Ping & Traceroute: The “hammer and screwdriver” of networking. Ping tests reachability; Traceroute lists every “hop” along the path, revealing where packets stop getting through.
- Wi-Fi Analyzers: Software that visualizes the “airwaves,” showing which channels are crowded by neighbors.
- DNS Flush: Clearing the computer’s cached “address book” of name-to-IP lookups, forcing it to fetch fresh addresses for each website.
- Loopback Testing: Testing the device against itself to rule out internal hardware failure.
- Packet Sniffers (Wireshark): For deep forensics, capturing the actual packets to see whether they are being corrupted or rejected by a firewall.
- Cellular Failover: Using a 4G/5G modem that only activates when the main line goes dark.
- SLA (Service Level Agreement) Monitoring: Tools that log every second of downtime to hold ISPs accountable for their uptime promises.
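Ping uses ICMP, which usually needs raw-socket privileges when sent from a script. A common substitute, sketched below, is a “TCP ping” that times the handshake to a specific port. This is a swapped-in technique rather than ICMP, and `tcp_ping` is a hypothetical helper name; one advantage is that it tests the service port itself, so it also notices a firewall blocking that port. Pass an IP address if you want to exclude DNS resolution time from the measurement.

```python
import socket
import time
from typing import Optional


def tcp_ping(host: str, port: int, timeout_s: float = 2.0) -> Optional[float]:
    """Time a TCP handshake to host:port in milliseconds, or return None on failure."""
    start = time.perf_counter()
    try:
        # create_connection resolves the name and completes the three-way handshake.
        with socket.create_connection((host, port), timeout=timeout_s):
            pass
    except OSError:  # refused, unreachable, timed out, or name lookup failed
        return None
    return (time.perf_counter() - start) * 1000


if __name__ == "__main__":
    rtt = tcp_ping("example.com", 443)
    print("unreachable" if rtt is None else f"{rtt:.1f} ms")
```

Calling it in a loop and logging each result gives the raw data that the SLA monitoring and latency metrics in this guide depend on.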

Risk Landscape and Compounding Failure Modes

Connection problems rarely exist in isolation. They often trigger a “cascade” of failures.

- The “Retry” Storm: When a connection is slow, software often tries to reconnect repeatedly. This “storm” of requests can overwhelm the router, turning a slow connection into a dead one.
- The Security Trade-off: Increasing security (firewalls, deep packet inspection) adds latency. In high-frequency environments, a “safe” connection might be a “useless” one.
- Thermal Throttling: Network equipment generates heat. In many server closets, connection “drops” are actually the hardware throttling or shutting down to protect itself from overheating.
- The “Hidden Node” Problem: In Wi-Fi, two devices that can’t “see” each other both try to talk to the router at once, causing “collisions” that destroy data.
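One widely used client-side mitigation for retry storms, not described above, is capped exponential backoff with random jitter: each failed attempt waits longer than the last, and the randomness keeps many clients that failed together from all retrying at the same instant. The sketch below treats any `OSError` as retryable, which is an assumption you would narrow in real code; the sleep and random-number functions are injectable so the logic can be tested without waiting.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    operation: Callable[[], T],
    attempts: int = 5,
    base_s: float = 0.5,
    cap_s: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
    rng: Optional[random.Random] = None,
) -> T:
    """Call `operation`, retrying on OSError with capped exponential backoff.

    Each wait is drawn uniformly from [0, min(cap_s, base_s * 2**n)]
    ("full jitter"), which spreads retries out instead of synchronizing them.
    """
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    rng = rng or random.Random()
    for n in range(attempts):
        try:
            return operation()
        except OSError:
            if n == attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            sleep(rng.uniform(0, min(cap_s, base_s * 2 ** n)))
    raise AssertionError("unreachable")  # the loop always returns or raises
```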

Governance, Maintenance, and Long-Term Adaptation

Effective management is a cycle, not a one-time fix.

The Connectivity Maintenance Lifecycle

- Weekly: Check for firmware updates for routers and access points.
- Monthly: Review “Usage Logs” to identify emerging bottlenecks before they cause a crash.
- Quarterly: A “Chaos Test”: manually unplugging the primary line to see if the backup systems actually work.
- Annually: Physical inspection of cables for UV damage, rodent interference, or corrosion.
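A quarterly chaos test is easier to interpret when the failover rule it exercises is explicit. The sketch below is a hypothetical model of such a rule, not how any particular router implements it: links are listed in priority order, and a link counts as down only after a set number of consecutive failed health checks, so a single lost probe does not make the uplink flap. The link names are placeholders.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class FailoverSelector:
    """Pick the highest-priority link that has not failed `threshold` checks in a row."""

    links: List[str]  # priority order, e.g. ["fiber", "cellular"]
    threshold: int = 3
    failures: Dict[str, int] = field(default_factory=dict)

    def record(self, link: str, healthy: bool) -> None:
        """Log one health-check result; any success resets the failure streak."""
        self.failures[link] = 0 if healthy else self.failures.get(link, 0) + 1

    def active(self) -> Optional[str]:
        """Return the link traffic should use, or None if every link is down."""
        for link in self.links:
            if self.failures.get(link, 0) < self.threshold:
                return link
        return None


if __name__ == "__main__":
    sel = FailoverSelector(["fiber", "cellular"])
    for _ in range(3):  # chaos test: the primary line is unplugged
        sel.record("fiber", False)
    print(sel.active())  # cellular
    sel.record("fiber", True)  # line plugged back in
    print(sel.active())  # fiber
```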

The Layered Checklist for Adaptation

- Inventory: Do we know every device connected to the network?
- Redundancy: If the “A” path fails, is there a “B” path?
- Documentation: Is the network map written down, or is it only in the IT person’s head?

Measurement, Tracking, and Evaluation

You cannot manage what you do not measure.

- Latency (Ping): Measured in milliseconds (ms). Under 30ms is great; over 150ms is problematic for real-time work.
- Packet Loss: Should be 0%. Even 1% loss can cause streaming and voice calls to stutter.
- Throughput (Speed): The volume of data delivered per second. Important for downloads, but often less important than latency for daily work.
- MTBF (Mean Time Between Failures): A measure of how “stable” the system is over months.
- MTTR (Mean Time To Repair): A measure of how “efficient” the diagnostic process is.
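These metrics reduce to a few lines of arithmetic. The sketch below assumes lost probes are recorded as `None`, and that uptime and repair durations are in any one consistent time unit; conventions for MTBF vary (some include repair time), so this uses the common form availability = MTBF / (MTBF + MTTR).

```python
from statistics import mean
from typing import Optional, Sequence, Tuple


def packet_loss_pct(rtts_ms: Sequence[Optional[float]]) -> float:
    """Share of probes that got no reply (recorded as None), as a percentage."""
    if not rtts_ms:
        raise ValueError("no samples")
    lost = sum(1 for r in rtts_ms if r is None)
    return 100.0 * lost / len(rtts_ms)


def reliability(uptimes: Sequence[float], repairs: Sequence[float]) -> Tuple[float, float, float]:
    """Return (MTBF, MTTR, availability) from observed up periods and repair durations."""
    mtbf = mean(uptimes)
    mttr = mean(repairs)
    return mtbf, mttr, mtbf / (mtbf + mttr)


if __name__ == "__main__":
    print(packet_loss_pct([12.0, None, 14.0, 13.0]))  # 25.0
    # Repair times from the incident log: 45 min and 10 min -> MTTR of 27.5 min.
    print(mean([45, 10]))  # 27.5
```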

Documentation Example: The Incident Log

| Date | Symptom | Root Cause | Resolution | Time to Fix |
|---|---|---|---|---|
| 03/10 | VPN Drop | MTU Mismatch | Reduced MTU to 1400 | 45 min |
| 03/12 | Slow Wi-Fi | Channel Overlap | Switched to Ch 161 | 10 min |

Common Misconceptions and Oversimplifications

- “More bars means better internet.” Bars represent signal strength, not signal quality. You can have full bars of a “noisy” signal that is unusable.
- “I have 1000Mbps, so I shouldn’t lag.” Speed is how much water the pipe can carry; lag (latency) is how long each drop takes to arrive. A big pipe can still have “slow” water.
- “Ethernet is always better.” While usually true, an aging Cat5 cable or a damaged run can force the link down to 100Mbps, which is slower than a good Wi-Fi 6E connection.
- “Public Wi-Fi is just ‘slow’.” It’s often not slow; it’s “captured.” The login page (captive portal) is often the part that is broken, not the internet itself.
- “Restarting fixes everything.” It fixes “state” errors, but it hides “environmental” errors. If you have to restart daily, you have a systemic problem that a reboot won’t solve.
- “Fiber is invincible.” Fiber is faster, but it is much more fragile than copper. A sharp bend in a fiber optic cable can “leak” light, causing intermittent errors that are incredibly hard to find.
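The 1000Mbps misconception can be checked with a rough model: fetch time is the round trips spent waiting on latency plus the time to push the bytes through the pipe. This simplified sketch ignores TCP slow start, congestion, and protocol overhead; it only shows how the two terms compare for a small request, such as a 100KB web response.

```python
def transfer_time_ms(size_bytes: int, bandwidth_mbps: float, rtt_ms: float,
                     round_trips: int = 1) -> float:
    """Rough fetch time: round trips of latency plus serialization at line rate."""
    serialization_ms = size_bytes * 8 / (bandwidth_mbps * 1_000_000) * 1000
    return round_trips * rtt_ms + serialization_ms


if __name__ == "__main__":
    print(round(transfer_time_ms(100_000, 1000, 80), 1))  # 80.8: big pipe, high latency
    print(round(transfer_time_ms(100_000, 100, 10), 1))   # 18.0: small pipe, low latency
```

For small transfers the latency term dominates, so the slower but closer connection wins; bandwidth only takes over once the payload is large.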

Conclusion

The ability to manage connection problems is becoming a core competency for the modern professional and the resilient organization. As we have seen, the “connection” is a multi-layered construct, vulnerable to everything from physical interference to logical misconfigurations. The shift from a reactive “fix-it” mindset to a systemic “governance” mindset is what separates a frustrating digital environment from a seamless one.

The most robust systems are not those that never fail, but those that fail “loudly” (providing clear diagnostics) and “safely” (falling back to redundant paths). By applying tiered diagnostic models, maintaining a clean signal environment, and documenting the network’s evolution, we can transform connectivity from a source of anxiety into a reliable foundation for innovation. In the end, managing the connection is about managing the flow of human intent through the digital ether.